What is retrieval-augmented generation (RAG)?

LLMs typically excel at answering general-knowledge questions quickly because they are trained on vast amounts of general-purpose data. To adapt these pre-trained models to more specific business applications, organizations often draw on additional data sources that supply deeper knowledge and important supporting context.

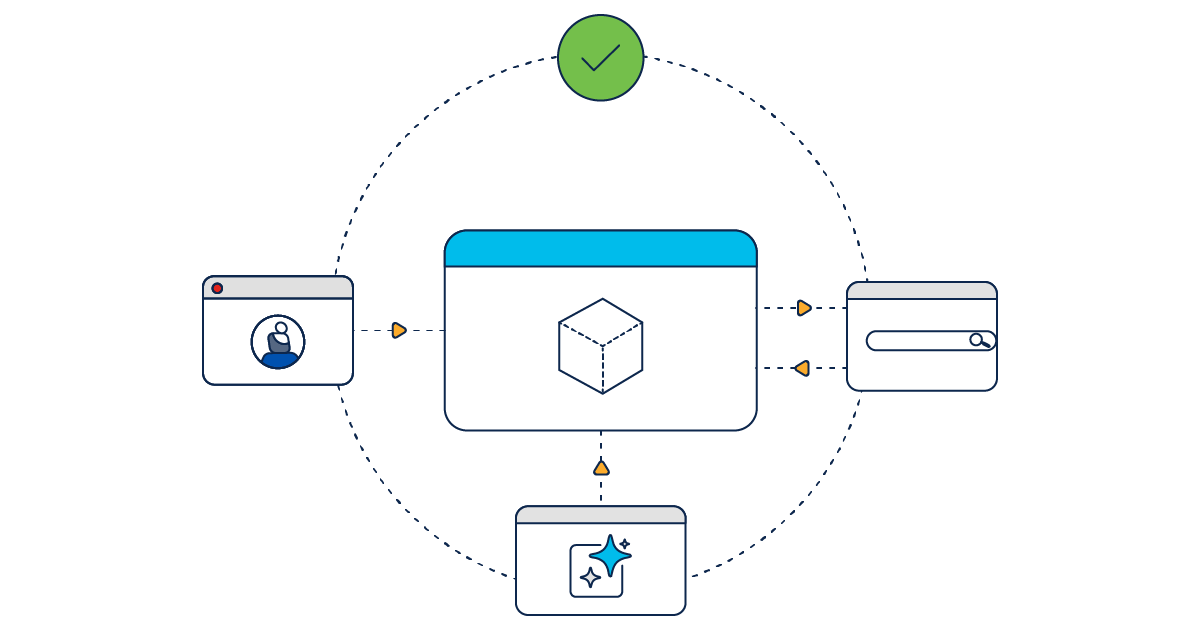

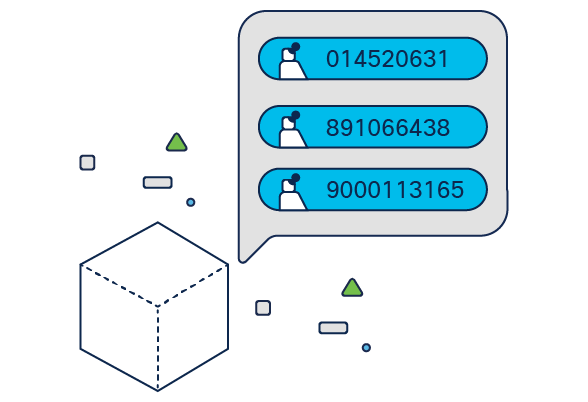

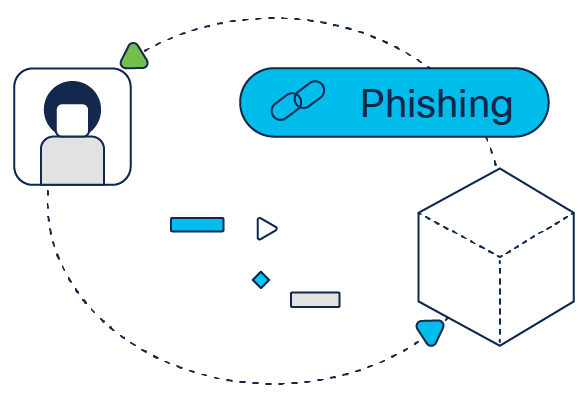

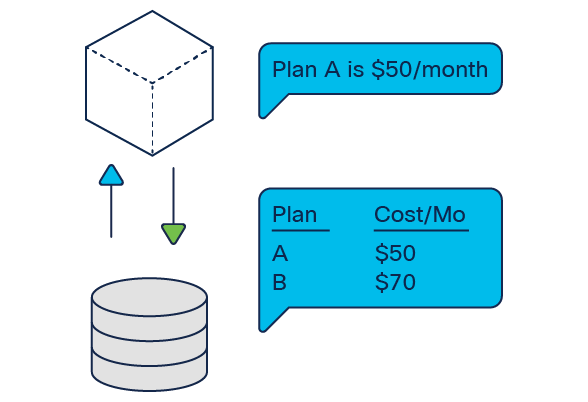

RAG is the most common technique used to enrich AI applications with relevant information by connecting LLMs to additional data sources: at query time, relevant content is retrieved from those sources and supplied to the model alongside the user's question. Vector databases enable RAG applications to draw on both structured and unstructured data, such as text files, spreadsheets, and code. Depending on the context required, these datasets may include external resources or internal repositories containing sensitive customer and business information.
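To make the idea of connecting an LLM to additional data sources more concrete, here is a minimal sketch of the retrieve-then-augment flow. It rests on several assumptions: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory `DOCS` list stands in for a vector database, and the function names and sample documents are hypothetical. The final call to an LLM is omitted; the sketch only builds the augmented prompt.

```python
import math
from collections import Counter

def embed(text):
    """Toy embedding (assumption): lowercase bag-of-words counts.
    A real RAG system would call an embedding model here."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query.
    A vector database would perform this search at scale."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query, context):
    """Augment the user's question with the retrieved context."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\n"
        f"Question: {query}"
    )

# Hypothetical internal knowledge base (illustrative data only).
DOCS = [
    "refunds are processed within 5 business days",
    "support is available by email on weekdays",
    "enterprise plans include single sign-on",
]

query = "how long are refunds processed"
top = retrieve(query, DOCS, k=1)
prompt = build_prompt(query, top)
print(prompt)  # this augmented prompt would then be sent to the LLM
```

In a production setup, the embedding model, the similarity search, and the prompt template would each be replaced by purpose-built components, but the overall shape (embed the query, find the closest documents, prepend them to the prompt) stays the same.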

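Compared to fine-tuning, RAG is often a faster, more flexible, and more cost-effective option for adapting an LLM for specific purposes, because it leaves the model's weights unchanged: updating what the application knows means updating the data source rather than retraining the model.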